#SciFund round 4 has concluded. I’m kind of excited to crunch the numbers and see how this round did compared with the earlier ones. I’d already compared the goals of round 4 to those of the previous three here.

The total amount of money committed across all 23 projects in round 4 was $55,272, bringing our total across all four rounds of the #SciFund challenge to $307,825.

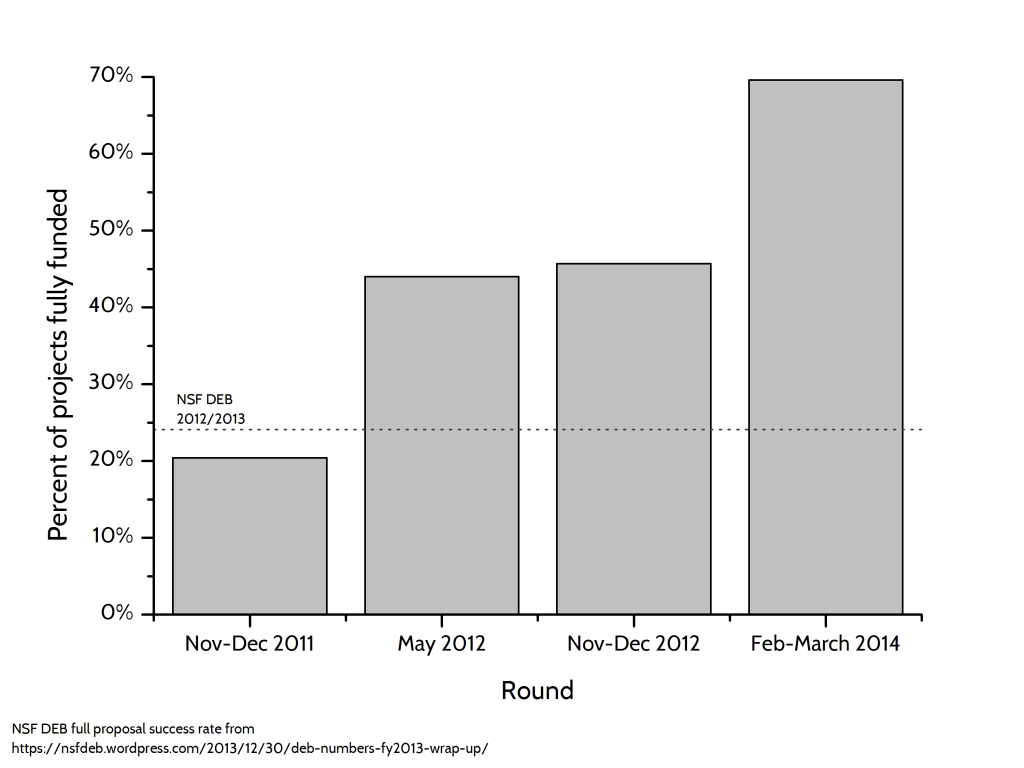

Without a doubt, this is my favourite graph:

We saw a big jump in the percent of projects completed. This is wonderful. This bodes well for some kinds of research projects to be supported through crowdfunding fairly consistently. A lot of the projects were ecologically oriented, so the full proposal success rates from NSF’s Division of Environmental Biology (dashed line) are a way to compare crowdfunding to traditional federal funding.

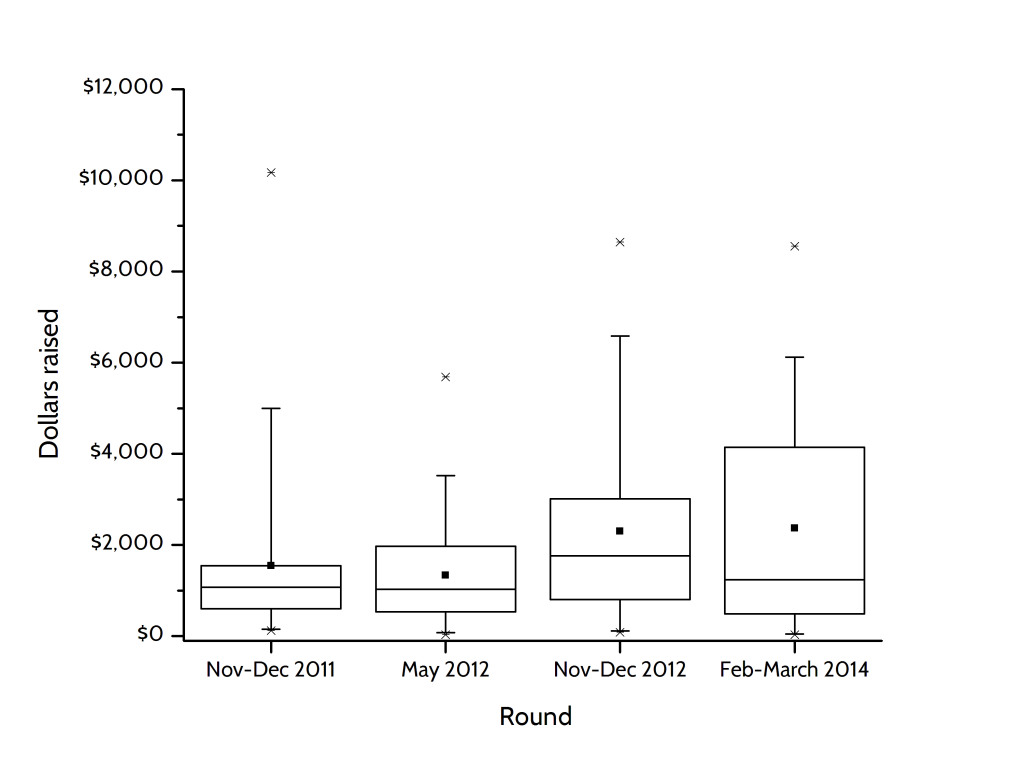

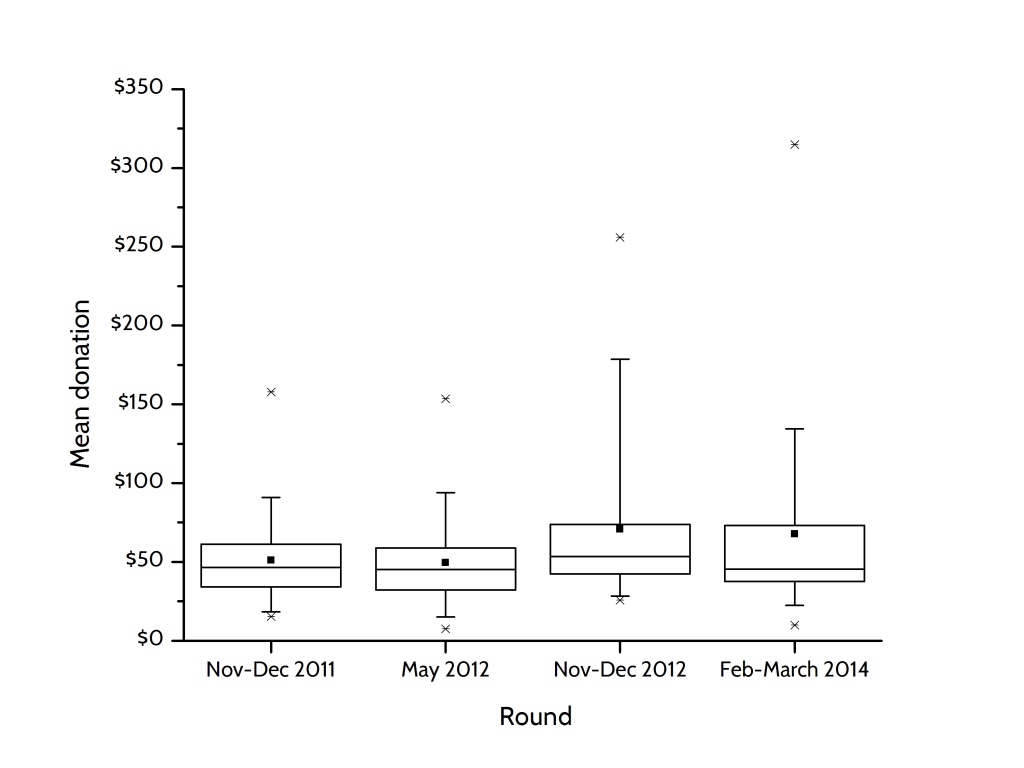

We still have to work on the dollar value of individual projects (shown below), which remains low compared to federal grants. (Reminder: the dot is the average, the horizontal line is the median, the box is 50% of the data, the whiskers are 95% of the data, and the stars are the minimum and maximum.) Still, while I am disappointed in the amount, I am very happy with the trend. We are seeing steady growth, particularly in the top half of projects.

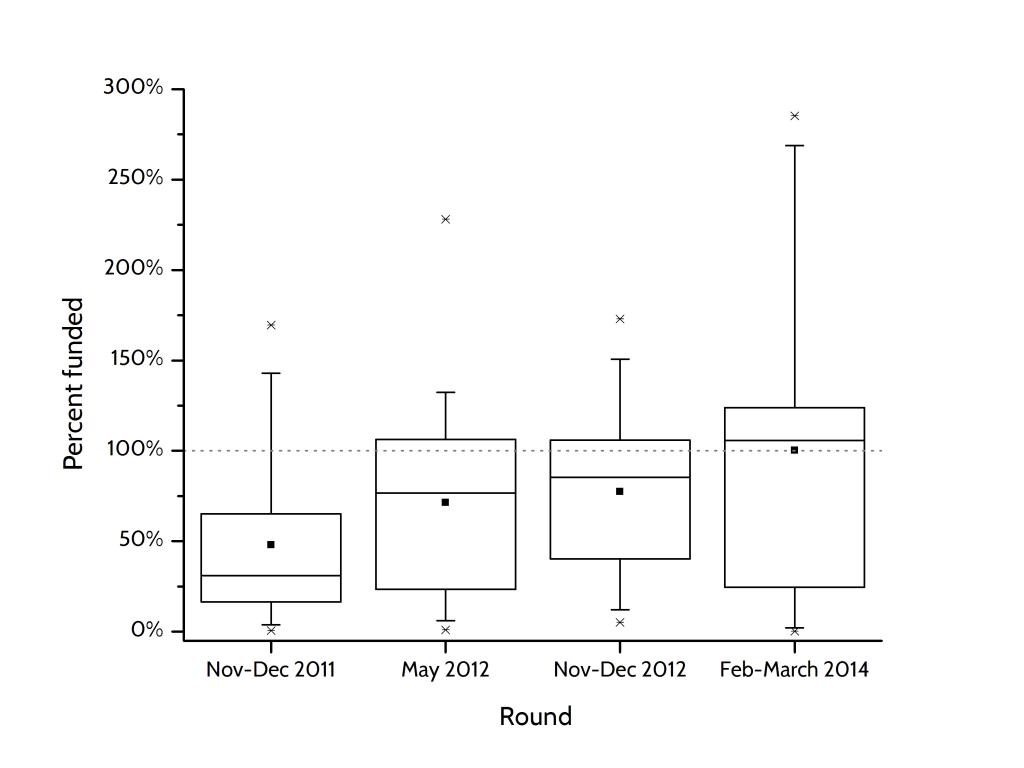
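If you want to compute the same box plot ingredients yourself, here is a minimal Python sketch. It assumes the box spans the 25th to 75th percentiles and the whiskers span the 2.5th to 97.5th percentiles (my reading of "50%" and "95%" of the data), and the amounts are made-up placeholders, not #SciFund data. The names `percentile` and `box_summary` are purely illustrative.

```python
from statistics import mean, median


def percentile(values, p):
    """Percentile with linear interpolation between ranks; p is in [0, 100]."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("need at least one value")
    rank = (len(ordered) - 1) * p / 100
    lower = int(rank)
    upper = min(lower + 1, len(ordered) - 1)
    fraction = rank - lower
    return ordered[lower] + (ordered[upper] - ordered[lower]) * fraction


def box_summary(amounts):
    """Return the pieces drawn in the plot: dot, line, box, whiskers, stars."""
    return {
        "mean": mean(amounts),                      # dot
        "median": median(amounts),                  # horizontal line
        "box": (percentile(amounts, 25), percentile(amounts, 75)),
        # Assumption: "95% of data" means the central 95%.
        "whiskers": (percentile(amounts, 2.5), percentile(amounts, 97.5)),
        "extremes": (min(amounts), max(amounts)),   # stars
    }


# Hypothetical dollar amounts, for illustration only.
example_amounts = [500, 1000, 1500, 2000, 5000]
summary = box_summary(example_amounts)
print(summary)
```

Note how a single large project pulls the mean above the median in this toy example, which is why the plot shows both.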

The growth is also apparent when we look at how close projects got to meeting their goal (indicated by the dashed line). The average in this round of #SciFund was… 100%, or fully funded! Also note that this round saw the greatest overachievement we’ve ever had, with one project raising almost three times its goal. I’ll discuss this particular rousing success in a later blog post.

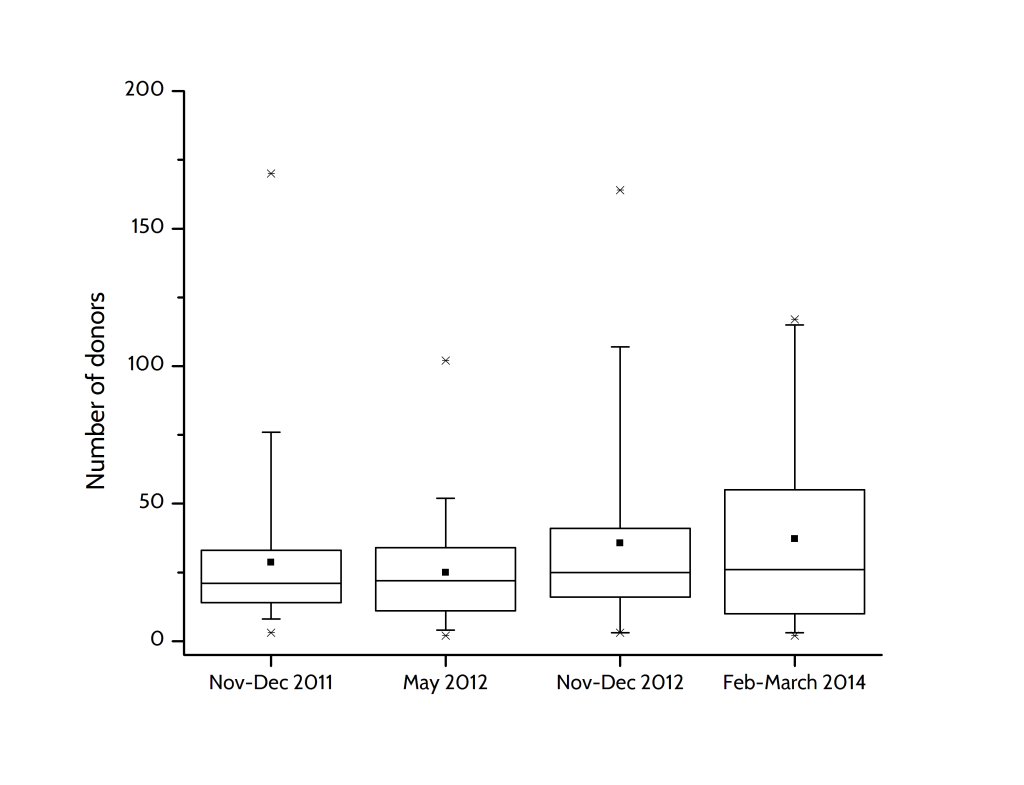
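For anyone recomputing this measure, here is a small Python sketch of percent of goal, assuming it is simply the amount raised divided by the goal. The `(goal, raised)` pairs are invented for illustration and are not the round 4 figures.

```python
from statistics import mean


def percent_of_goal(raised, goal):
    """Amount raised as a percentage of the project's goal."""
    if goal <= 0:
        raise ValueError("goal must be positive")
    return 100 * raised / goal


# Hypothetical (goal, raised) pairs in dollars, for illustration only.
example_projects = [(1000, 500), (1000, 500), (1000, 2000)]

percents = [percent_of_goal(raised, goal) for goal, raised in example_projects]
average_percent = mean(percents)

# Share of projects that reached or passed their goal.
share_funded = sum(p >= 100 for p in percents) / len(percents)

print(percents, average_percent, share_funded)
```

In this toy example the average is 100% even though only one project in three met its goal, because one overachiever offsets two shortfalls. That is worth keeping in mind when reading an average alongside the percentage of projects funded.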

In previous rounds, we’ve seen that the amount that people give tends to be pretty constant, meaning success is all about the number of donors you have. This trend continues, as we see the average donation is about the same as round 3, or even a little lower:

Meanwhile, the number of donors each project attracts is, as expected, up a little from previous rounds.
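The arithmetic behind "success is all about the number of donors" can be sketched in a few lines of Python. It assumes the amount raised is roughly the number of donors times a fairly constant average donation. The goal and donation size below are hypothetical, and `donors_needed` is an illustrative name.

```python
import math


def donors_needed(goal, average_donation):
    """Smallest whole number of donors whose combined gifts reach the goal,
    assuming every donor gives the average donation."""
    if average_donation <= 0:
        raise ValueError("average_donation must be positive")
    return math.ceil(goal / average_donation)


# Hypothetical numbers, for illustration only.
example_goal = 5000
example_average_donation = 75

print(donors_needed(example_goal, example_average_donation))
```

If the average gift barely moves from round to round, doubling a goal means roughly doubling the number of donors you have to reach.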

One of the slight surprises for me is how consistent this round is with the previous three. This surprised me because we switched platforms (from RocketHub to Experiment). These are two very different platforms, with different funding schemes (especially in how they deal with unfunded projects), and presumably different audiences. And yet the net outcome was not dramatically different.

If you want to play with the data yourself (and see a few measures I haven’t discussed here yet), they are deposited over at figShare.